Jakub · DevOps

Running Ollama on EKS: A Production-Grade LLM Setup with a Custom Helm Chart

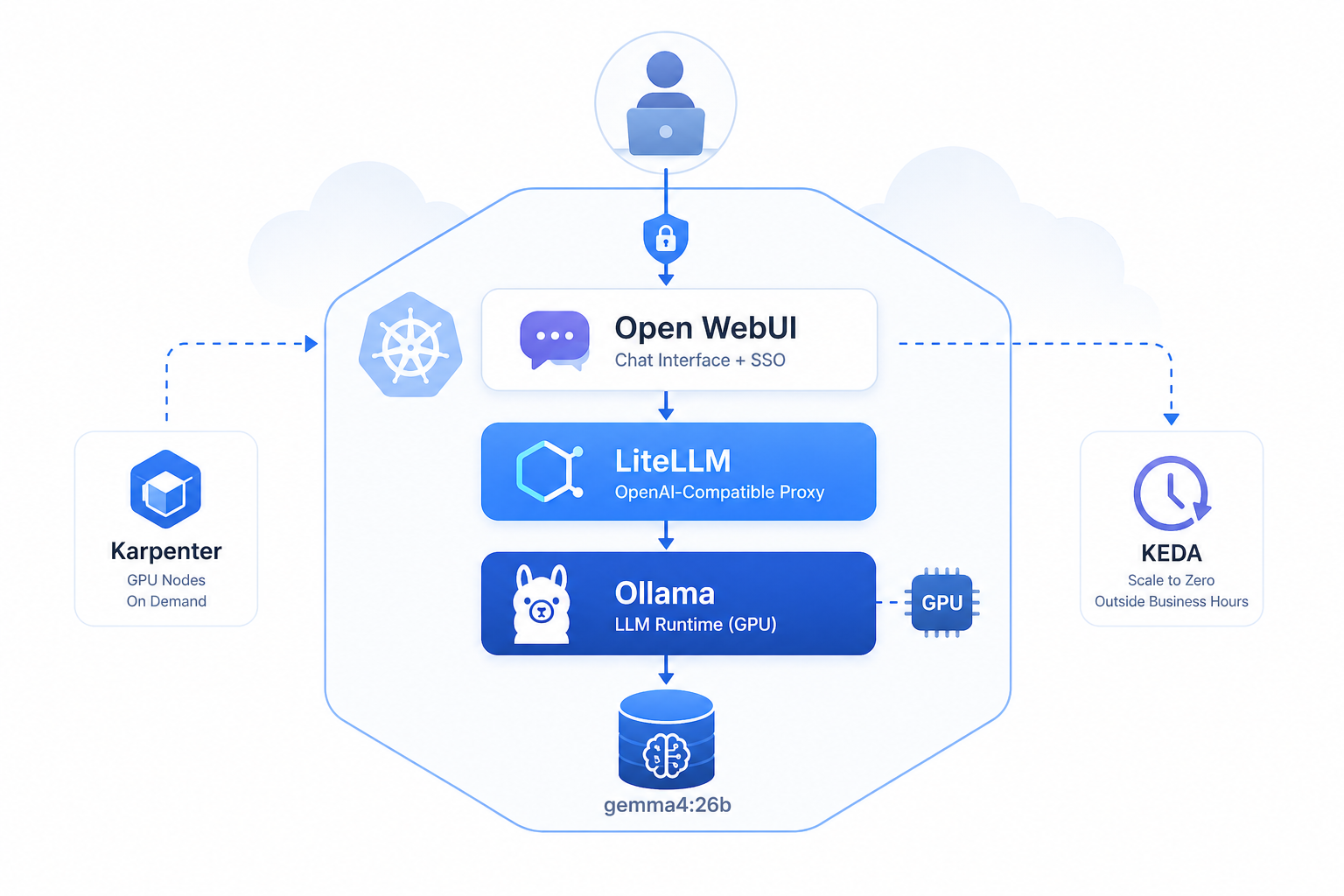

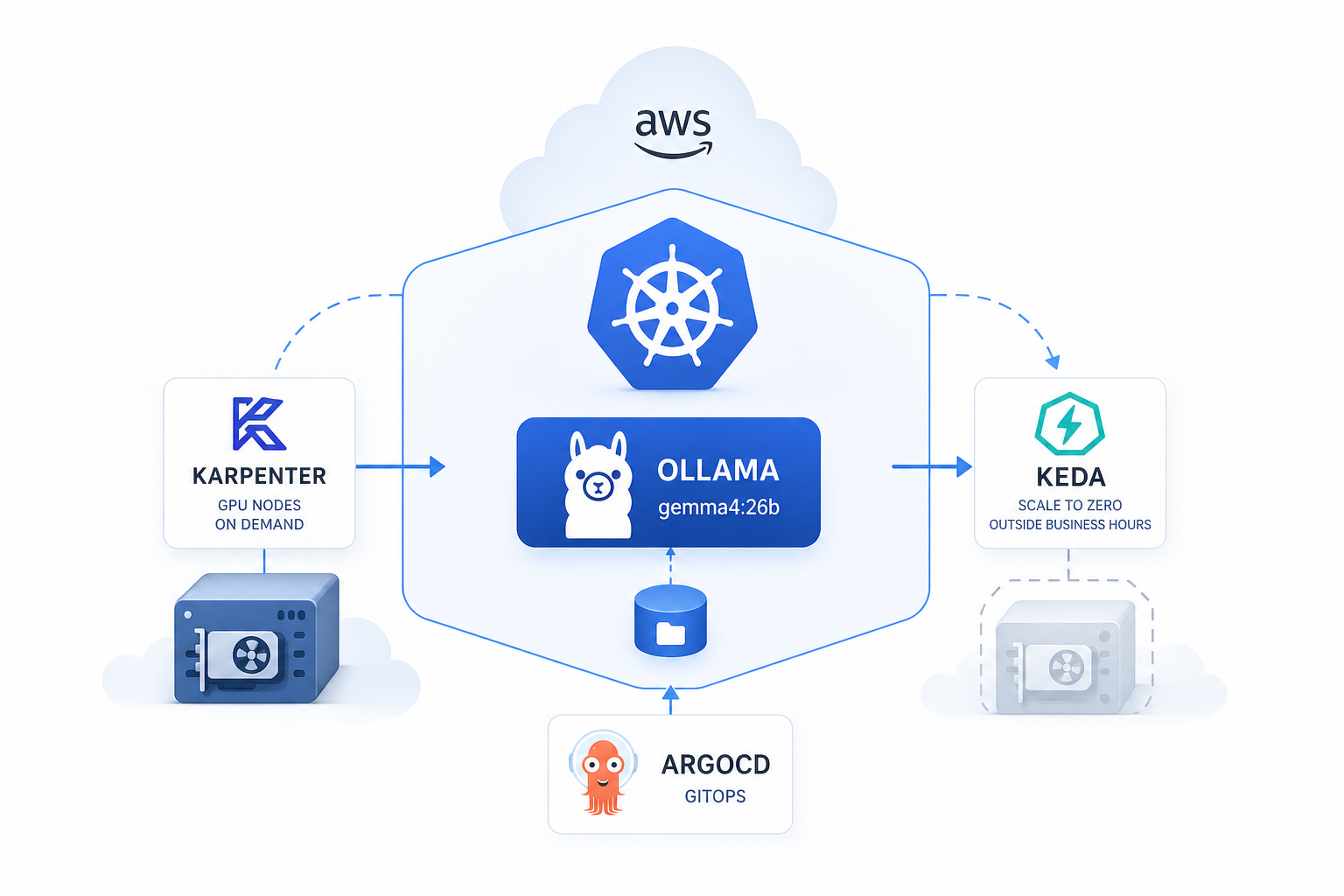

GPU nodes on demand, zero cost at night, and models that survive restarts: how I deployed self-hosted Ollama on Amazon EKS using a single Helm chart with Karpenter, KEDA, and ArgoCD.