· Jakub · Platform Engineering · 5 min read

Running n8n at Scale on EKS: Queue Mode, Redis, and External Secrets

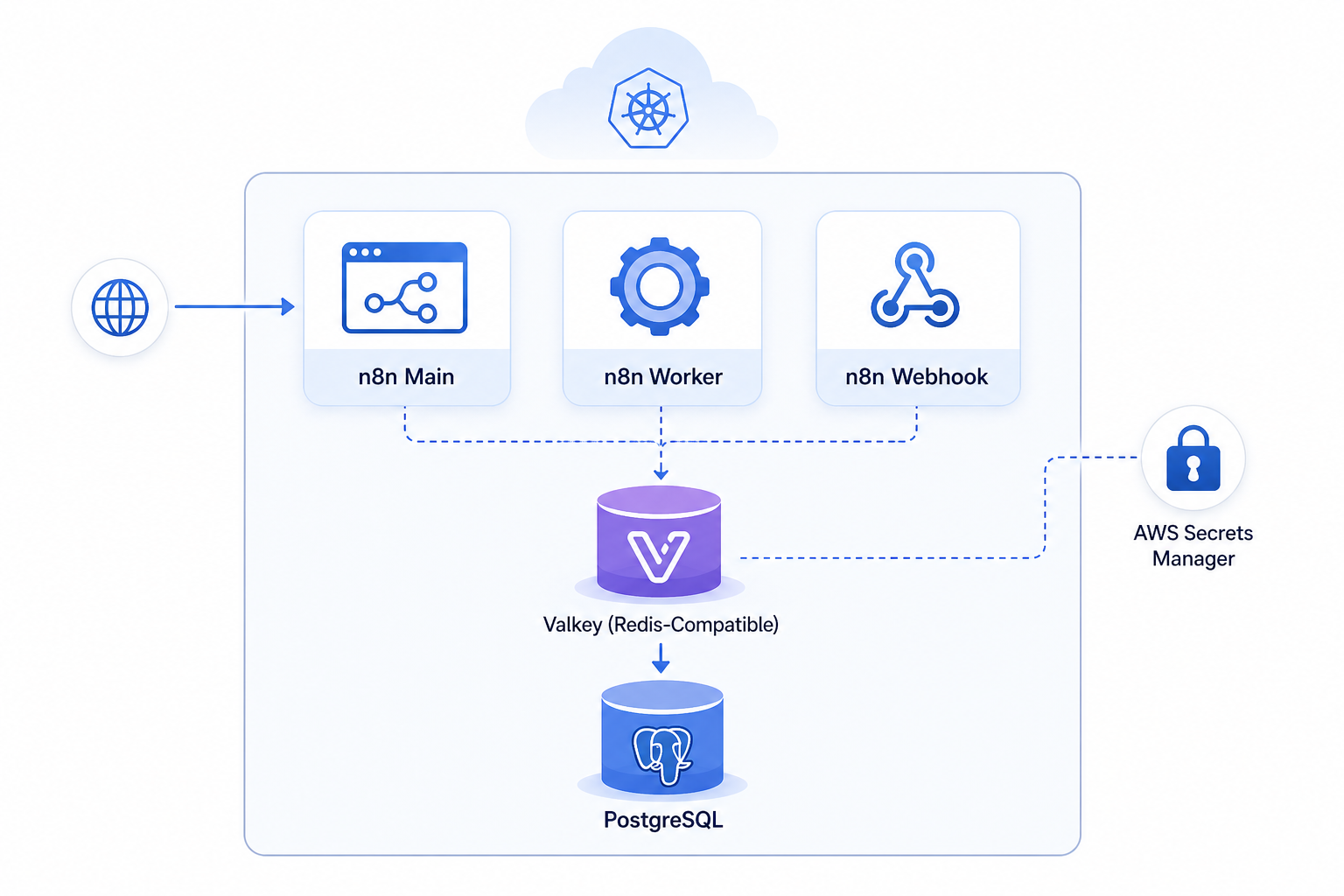

A breakdown of how we deploy n8n in production on Amazon EKS using queue mode, Valkey for Redis-compatible brokering, and AWS Secrets Manager via External Secrets Operator.

Introduction

Workflow automation tooling has become a serious infrastructure concern for SaaS platforms. When n8n moves beyond a single container running on a VM and into a Kubernetes-native deployment handling production workloads, the architecture decisions compound quickly.

This article documents a production-grade n8n deployment on Amazon EKS. The system separates execution concerns across three distinct process types — main, worker, and webhook — backed by a Redis-compatible broker and a managed PostgreSQL database, with secrets sourced directly from AWS Secrets Manager.

System Architecture

The deployment is structured as a Helm release consuming the upstream n8n chart from the 8gears OCI registry, extended with a custom SecretStore and a co-located ocr-service dependency.

```yaml
# Chart.yaml of the umbrella chart
dependencies:
  - name: n8n
    version: "2.0.1"
    repository: oci://8gears.container-registry.com/library
  - name: ocr-service
    version: "0.1.0"
```

Three runtime components are deployed independently:

- Main — the editor and API surface, exposed via ALB ingress

- Worker — consumes jobs from the Bull queue

- Webhook — handles inbound HTTP triggers without contending with the main process

All three share the same extraEnv block via YAML anchors, ensuring environment parity across process types.
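A minimal sketch of the anchor pattern follows. It assumes the chart exposes `main`, `worker`, and `webhook` blocks that each accept an `extraEnv` map of `NAME: {value | valueFrom}` entries, so check the layout against the chart's `values.yaml`. The Secret name `n8n-secrets` and its keys are hypothetical.

```yaml
main:
  # `&sharedEnv` anchors this map so the other process types can reuse it verbatim
  extraEnv: &sharedEnv
    DB_TYPE:
      value: postgresdb
    DB_POSTGRESDB_HOST:
      valueFrom:
        secretKeyRef:
          name: n8n-secrets          # hypothetical Secret synced by External Secrets Operator
          key: DB_POSTGRESDB_HOST
    DB_POSTGRESDB_PASSWORD:
      valueFrom:
        secretKeyRef:
          name: n8n-secrets
          key: DB_POSTGRESDB_PASSWORD
    N8N_ENCRYPTION_KEY:
      valueFrom:
        secretKeyRef:
          name: n8n-secrets
          key: N8N_ENCRYPTION_KEY
    N8N_DEFAULT_BINARY_DATA_MODE:
      value: filesystem

worker:
  extraEnv: *sharedEnv   # alias: an identical copy of main's extraEnv

webhook:
  extraEnv: *sharedEnv
```

Sharing `N8N_ENCRYPTION_KEY` this way is more than tidiness. Workers decrypt stored credentials when they run workflows, so every process type must use the same key.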

A Valkey instance (Redis-compatible) runs as a Deployment (not a StatefulSet) with persistence disabled. This suits a queue broker when recovery is handled at the workflow level, through idempotent or retry-tolerant workflows, rather than by the broker itself.
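The exact manifests come from the Helm release. The following plain-manifest sketch shows the same idea: a single-replica Valkey Deployment with no volume, and with RDB snapshots and the append-only file switched off. The labels and image tag are illustrative assumptions. Only the `n8n-valkey` service name and port 6379 come from the queue configuration in this article.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: n8n-valkey
spec:
  replicas: 1                        # one broker instance, no replication
  strategy:
    type: Recreate                   # never run two independent in-memory brokers side by side
  selector:
    matchLabels:
      app: n8n-valkey
  template:
    metadata:
      labels:
        app: n8n-valkey
    spec:
      containers:
        - name: valkey
          image: valkey/valkey:8.0   # assumed tag; pin to the version you have tested
          # Disable RDB snapshots and AOF: queue state lives only in memory
          args: ["valkey-server", "--save", "", "--appendonly", "no"]
          ports:
            - containerPort: 6379
---
apiVersion: v1
kind: Service
metadata:
  name: n8n-valkey                   # the host the n8n queue config points at
spec:
  selector:
    app: n8n-valkey
  ports:
    - port: 6379
      targetPort: 6379
```

The `Recreate` strategy is a deliberate choice here. With the default rolling update, the Service could briefly route to an old and a new Valkey pod at once, and each would hold a different, unpersisted queue.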

Runtime & Scaling Behavior

Execution mode is set to queue, which decouples job intake from job execution:

```yaml
config:
  executions_mode: queue
  queue:
    health:
      check:
        active: true
    bull:
      redis:
        host: n8n-valkey
        port: 6379
```

Workers pull jobs from Bull queues backed by Valkey. The webhook process handles inbound triggers independently, avoiding head-of-line blocking on the main process during execution spikes.
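The following container `env` fragment shows the same settings as n8n's own environment variables. It assumes the chart flattens the nested `config` keys into upper-case, underscore-joined variable names. Confirm this in the rendered manifests, for example with `helm template`.

```yaml
env:
  - name: EXECUTIONS_MODE
    value: "queue"              # main enqueues executions; workers run them
  - name: QUEUE_BULL_REDIS_HOST
    value: "n8n-valkey"
  - name: QUEUE_BULL_REDIS_PORT
    value: "6379"
  - name: QUEUE_HEALTH_CHECK_ACTIVE
    value: "true"               # turns on n8n's worker health-check endpoint
```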

Binary data is stored on the filesystem (N8N_DEFAULT_BINARY_DATA_MODE: filesystem) with persistence enabled on both worker and webhook deployments. This matters for workflows that process files — without persistent volumes, binary data is lost on pod restarts.
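Here is a sketch of the persistence side. It assumes the chart accepts a `persistence` block per process type, and the key names are assumptions. One point to verify: with ReadWriteOnce volumes such as EBS, each pod's volume is private to that pod, so a file written by a webhook pod is not visible to a worker pod. n8n's queue-mode documentation has also cautioned that filesystem binary data storage is not supported in queue mode, with S3 as the suggested alternative. Check this for the n8n version you run.

```yaml
worker:
  persistence:
    enabled: true    # assumed to mount a PVC at n8n's data directory (binaryData lives there by default)
    size: 10Gi       # placeholder; size for your largest expected files
webhook:
  persistence:
    enabled: true
    size: 10Gi
```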

Secrets Management

Secrets are injected at runtime via the External Secrets Operator (ESO), which syncs values from AWS Secrets Manager into Kubernetes Secrets. A SecretStore resource connects ESO to AWS Secrets Manager using IRSA (IAM Roles for Service Accounts):

```yaml
spec:
  provider:
    aws:
      service: SecretsManager
      region: eu-central-1
```

The service account on the main deployment carries the IAM role annotation:

```yaml
serviceAccount:
  annotations:
    eks.amazonaws.com/role-arn: "arn:aws:iam::XXXXXXXXXXXX:role/stage-jakops-n8n"
```

Database credentials, the encryption key, and connection details reach the containers as environment variables sourced through `secretKeyRef`. None are baked into the image or the Helm values.
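The following minimal sketch shows the two External Secrets Operator resources in that chain. It assumes ESO serves the `external-secrets.io/v1beta1` API; newer releases also serve `v1`. The store name, the service account name `n8n`, the Secrets Manager secret `stage/n8n`, and its JSON property names are all hypothetical. With `auth.jwt.serviceAccountRef`, ESO requests a token for the IRSA-annotated service account and uses it to assume that role.

```yaml
apiVersion: external-secrets.io/v1beta1
kind: SecretStore
metadata:
  name: aws-secrets-manager          # hypothetical name
spec:
  provider:
    aws:
      service: SecretsManager
      region: eu-central-1
      auth:
        jwt:
          serviceAccountRef:
            name: n8n                # hypothetical: the service account carrying the role-arn annotation
---
apiVersion: external-secrets.io/v1beta1
kind: ExternalSecret
metadata:
  name: n8n-secrets
spec:
  refreshInterval: 1h                # how often ESO re-reads Secrets Manager
  secretStoreRef:
    kind: SecretStore
    name: aws-secrets-manager
  target:
    name: n8n-secrets                # Kubernetes Secret that ESO creates and owns
    creationPolicy: Owner
  data:
    - secretKey: N8N_ENCRYPTION_KEY
      remoteRef:
        key: stage/n8n               # hypothetical Secrets Manager secret (JSON)
        property: N8N_ENCRYPTION_KEY
    - secretKey: DB_POSTGRESDB_PASSWORD
      remoteRef:
        key: stage/n8n
        property: DB_POSTGRESDB_PASSWORD
```

ESO writes the result into a regular Kubernetes Secret, which the n8n containers read through `secretKeyRef`. A rotated value in Secrets Manager reaches that Secret on the next refresh, but running pods only see new environment values after a restart.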

Key Engineering Decisions

Queue mode over single-process: Running n8n in regular (single-process) mode is operationally simple, but the editor, webhook handling, and workflow execution then compete for the same process. Queue mode isolates these concerns and allows independent scaling of each component.
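Because queue mode makes the worker tier its own Deployment, it can be scaled on its own. The following minimal sketch is a standard `autoscaling/v2` HorizontalPodAutoscaler. It assumes a worker Deployment named `n8n-worker` (hypothetical; use the name the chart renders), metrics-server in the cluster, and CPU requests set on the worker containers.

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: n8n-worker
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: n8n-worker            # hypothetical rendered name
  minReplicas: 2                # placeholder bounds
  maxReplicas: 10
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70  # percent of the containers' CPU request
```

CPU is only a proxy for queue backlog. If the chart also sets a fixed replica count, prefer its own autoscaling option if it has one, so that Helm upgrades and the HPA do not fight over `replicas`.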

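Valkey over managed Redis: A lightweight in-cluster Valkey deployment avoids the cost and operational overhead of a managed Redis instance for a queue broker workload. Skipping broker-level persistence is a deliberate bet rather than something Bull guarantees. Its at-least-once delivery only protects jobs the broker still holds, so jobs queued in Valkey are lost if the pod restarts (see Trade-offs).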

ALB with group annotation: The ingress uses a shared ALB via alb.ingress.kubernetes.io/group.name, consolidating load balancer costs across multiple services in the same cluster rather than provisioning a dedicated ALB per service.
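The following sketch shows an Ingress joining the shared ALB. It assumes the AWS Load Balancer Controller with an IngressClass named `alb`. The host, service name, and port are placeholders; only the group name comes from this deployment.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: n8n
  annotations:
    # Every Ingress with the same group.name is merged onto one ALB
    alb.ingress.kubernetes.io/group.name: "jakops-stage"
    # Optional: rule evaluation order within the group (lower values first)
    alb.ingress.kubernetes.io/group.order: "10"
spec:
  ingressClassName: alb
  rules:
    - host: n8n.example.internal     # placeholder host
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: n8n            # placeholder service name
                port:
                  number: 80
```

Host-based rules keep the services in one group from colliding, since they all share the same listeners.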

IRSA for AWS credential access: Rather than mounting static credentials, the deployment uses pod-level IAM role assumption via IRSA. This pairs with the AWS_STS_REGIONAL_ENDPOINTS: regional setting, which sends STS calls to the eu-central-1 regional endpoint instead of the global endpoint (sts.amazonaws.com). Because the global endpoint is served from us-east-1, staying regional avoids cross-region latency and a dependency on another region.
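There are two ways to get the regional STS behaviour into the pods. One is the `eks.amazonaws.com/sts-regional-endpoints` service-account annotation, which asks the EKS Pod Identity Webhook to inject `AWS_STS_REGIONAL_ENDPOINTS=regional`. The other is setting the variable directly. The placement of both keys is an assumption about the chart's values layout.

```yaml
serviceAccount:
  annotations:
    eks.amazonaws.com/role-arn: "arn:aws:iam::XXXXXXXXXXXX:role/stage-jakops-n8n"
    # Option A: the pod identity webhook injects AWS_STS_REGIONAL_ENDPOINTS=regional
    eks.amazonaws.com/sts-regional-endpoints: "true"

main:
  extraEnv:
    # Option B: set the variable explicitly on the container
    AWS_STS_REGIONAL_ENDPOINTS:
      value: regional
```

Either option alone is enough. Setting both is redundant but harmless.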

Trade-offs

Optimized for: operational isolation between n8n process types, cost-conscious shared infrastructure (ALB grouping, in-cluster broker), and secrets hygiene via ESO.

Sacrificed: broker durability (Valkey runs without persistence), which means in-flight jobs can be lost during a Valkey pod restart. This is an acceptable trade-off when workflows are idempotent or retry-tolerant.

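TLS termination is handled at the ALB with an HTTP→HTTPS redirect. Behind it, NODE_TLS_REJECT_UNAUTHORIZED: "0" and DB_POSTGRESDB_SSL_REJECT_UNAUTHORIZED: false are set, and their scope is wider than it may look. The first disables certificate verification for every outbound TLS connection the Node.js process makes, including workflow calls to external APIs. The second skips verification of the PostgreSQL server certificate. This is a deliberate internal-network trust decision, backed by the ALB being configured as internal rather than internet-facing. Note that this limits who can reach n8n, not where n8n's outbound traffic goes.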
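The ALB side of this setup maps onto AWS Load Balancer Controller annotations. The following sketch shows them as Ingress metadata. The certificate ARN is a placeholder, and with the chart these would go wherever it accepts ingress annotations.

```yaml
metadata:
  annotations:
    alb.ingress.kubernetes.io/group.name: "jakops-stage"
    # Internal ALB: reachable only from inside the VPC and connected networks
    alb.ingress.kubernetes.io/scheme: internal
    # Listen on both ports so the HTTP listener can issue the redirect
    alb.ingress.kubernetes.io/listen-ports: '[{"HTTP": 80}, {"HTTPS": 443}]'
    # Redirect every HTTP request to the HTTPS listener on 443
    alb.ingress.kubernetes.io/ssl-redirect: "443"
    # ACM certificate used to terminate TLS at the ALB (placeholder ARN)
    alb.ingress.kubernetes.io/certificate-arn: "arn:aws:acm:eu-central-1:XXXXXXXXXXXX:certificate/<certificate-id>"
```

The scheme is a property of the whole load balancer, so every Ingress in the `jakops-stage` group should agree on `internal`.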

Cost & Operational Impact

The ALB group pattern (alb.ingress.kubernetes.io/group.name: "jakops-stage") means this service shares an ALB with other workloads in the same environment. At a fixed charge of roughly $20/month per ALB in eu-central-1 (before LCU usage charges), the savings grow with every service that joins the group instead of getting its own load balancer.
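The following rough worked example uses only the ~$20/month fixed figure above and ignores LCU usage charges, which depend on traffic. It compares N services on dedicated ALBs with the same N services on one shared ALB:

```latex
\text{fixed saving per month} \approx (N - 1) \times \$20,
\qquad \text{e.g. } N = 10:\; (10 - 1) \times \$20 = \$180
```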

Running Valkey as a Deployment with persistence.enabled: false eliminates EBS volume provisioning for the broker — a small but real cost reduction with no functional downside given the workload characteristics.

Conclusion

Deploying n8n in production on EKS requires more than lifting the official container image into a cluster. Queue mode with separated worker and webhook processes, IRSA-based secrets management, and shared ALB infrastructure form a cohesive system that scales cleanly and operates securely.

The key insight: treat n8n like any other stateful, multi-process application. The process boundaries, credential boundaries, and persistence boundaries all deserve explicit design — not defaults.

Need Help Running n8n in Production?

If you’d rather skip the trial-and-error and get a production-ready n8n deployment from day one — that’s exactly what I do at jakops.cloud.

I can help you with:

- Deploying n8n on EKS in queue mode with separated main, worker, and webhook processes

- Setting up Valkey or Redis as a Bull queue broker in your cluster

- Integrating External Secrets Operator with AWS Secrets Manager via IRSA

- Helm chart customization and multi-environment workflow automation deployments

- ALB ingress consolidation and cost-conscious shared infrastructure design

📩 Book a free 30-minute infrastructure audit — I’ll review your current setup, identify the gaps, and tell you exactly what needs to change.

No fluff. Just actionable advice from someone who’s done this in production.

jakops.cloud — AWS & Kubernetes DevOps services